When HR data enters the conversation, the tone changes immediately.

Unlike sales or operations systems, HR systems contain highly sensitive information: personally identifiable details, compensation records, performance ratings, health indicators, and compliance-related attributes. For IT and security teams, the first concern is not dashboard design or analytics speed. It is exposure.

The question usually comes quickly and directly:

“Are you storing a copy of our HR data?”

It is a fair question. The answer affects security posture, data residency obligations, and overall risk surface. Every additional copy of sensitive data expands what must be governed.

Part of the confusion comes from the term “cloud-based.” It can describe several different architectural models.

This article explains the difference between live querying, replication, temporary files, and metadata, and what security reviewers should verify before approving HR analytics adoption.

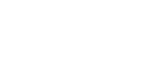

Three Common Architectural Models for HR Data

Not all analytics platforms handle HR data the same way. The phrase “cloud-based” hides important architectural differences. What matters is not where the interface runs, but where the data resides and how it moves.

Model 1: Live Query (No Data Replication)

The analytics platform queries the HR system in real or near real time. Data remains in the source system. Minimal or no employee data is stored elsewhere.

This model reduces duplication and limits exposure. The trade-off is performance dependency on the source system and potential latency for complex analysis.

Model 2: Data Replication

HR data is copied into a warehouse or analytics environment. This enables faster performance, historical modeling, and cross-system analysis. However, it expands the governance surface because sensitive data now exists in multiple locations.

Model 3: Hybrid / Cached Output

Data is queried live, but aggregated or cached results are temporarily stored for performance. Some storage exists, but typically in a scoped, controlled form.
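To make the hybrid model concrete, here is a minimal Python sketch of a time-limited cache sitting in front of a live query. It is an illustration under stated assumptions, not a description of any specific product: the `AggregateCache` class, the `headcount_by_department` function, the sample rows, and the 300-second lifetime are all hypothetical.

```python
import time
from typing import Callable, Dict, Tuple


class AggregateCache:
    """Holds aggregated results only, each for a limited time (hypothetical helper)."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._entries: Dict[str, Tuple[float, dict]] = {}

    def get_or_compute(self, key: str, live_query: Callable[[], dict]) -> dict:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self.ttl_seconds:
            return entry[1]      # still fresh: the source system is not queried
        result = live_query()    # missing or expired: query the source live
        self._entries[key] = (now, result)
        return result


def headcount_by_department() -> dict:
    # Stand-in for a live query against the HR system (made-up rows).
    source_rows = [
        {"employee_id": 1, "department": "Finance"},
        {"employee_id": 2, "department": "Finance"},
        {"employee_id": 3, "department": "Legal"},
    ]
    counts: Dict[str, int] = {}
    for row in source_rows:
        counts[row["department"]] = counts.get(row["department"], 0) + 1
    return counts  # only the aggregate is returned, never the row-level fields


cache = AggregateCache(ttl_seconds=300)  # assumed 5-minute lifetime
summary = cache.get_or_compute("headcount_by_department", headcount_by_department)
print(summary)
```

The point of the sketch is that what sits in the cache is a departmental count with an expiry, not a copy of employee records. A reviewer can ask the same two things of any real platform: what shape the cached data takes, and how long it lives.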

What Is Metadata (And Why It’s Not Shadow HR Data)

Many security reviews blur the line between metadata and employee data. They are not the same thing.

Metadata is structural information about the system. It includes table names, field names, data structures, query definitions, and role configurations. In simple terms, metadata describes how data is organized and how reports are built.

Metadata does not include employee names, compensation values, performance scores, or health indicators. It does not store the actual content of HR records.

Metadata is stored for specific reasons. It preserves report definitions so dashboards can run consistently. It supports access control logic. It enables audit traceability by recording how queries and permissions are configured.

Without metadata, analytics cannot function reliably. With metadata, structure and governance are maintained.
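A short Python sketch may help show what metadata looks like in practice. The `ReportDefinition` structure, the table and field names, and the roles below are hypothetical, chosen only to illustrate the idea that a report definition describes structure without holding any record content.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ReportDefinition:
    """Metadata only: names, structure, and roles. No employee values."""
    name: str
    source_table: str
    fields: Tuple[str, ...]         # field names the report may read
    group_by: Tuple[str, ...]       # how results are aggregated
    allowed_roles: Tuple[str, ...]  # who may run the report


def build_query(report: ReportDefinition) -> str:
    # Refuse to group by a field the definition does not declare.
    unknown = [col for col in report.group_by if col not in report.fields]
    if unknown:
        raise ValueError(f"group_by uses undeclared fields: {unknown}")
    columns = ", ".join(report.group_by)
    return (
        f"SELECT {columns}, COUNT(*) AS row_count "
        f"FROM {report.source_table} GROUP BY {columns}"
    )


# Hypothetical report definition: every value here is a name, not a record.
attrition_report = ReportDefinition(
    name="attrition_by_department",
    source_table="employee_assignments",
    fields=("department", "termination_date"),
    group_by=("department",),
    allowed_roles=("hr_analyst", "hr_director"),
)

print(build_query(attrition_report))
```

Everything stored in `attrition_report` is a label: a table name, field names, role names. The query it produces still has to run against the source system to return any actual HR values.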

What Actually Gets Stored (If Anything)

When IT asks whether HR data is stored, the right answer requires precision.

Depending on architecture, some limited artifacts may exist outside the source system. These are not full HR datasets, but operational components that support analytics.

What may exist:

- Cached aggregates: Pre-calculated summaries to improve performance

- Temporary query results: Short-lived outputs generated during analysis

- Audit logs: Records of who accessed what and when

- Configuration files: Report definitions and access rules

- User access logs: Authentication and activity tracking

Why they exist:

To ensure performance, governance, traceability, and system reliability.

How long they persist:

Often time-bound and policy-controlled, not permanent replicas.

How they are secured:

Encrypted at rest, access-controlled, and monitored.

How deletion applies:

Retention and purge policies govern lifecycle, including termination workflows.
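As an illustration of how time-bound retention and purge rules can be expressed, here is a minimal Python sketch. The artifact kinds, the retention periods, and the rule that contract termination removes everything are assumptions made for the example; real policies vary, and some organizations are required to keep audit logs longer.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List


@dataclass
class Artifact:
    kind: str
    created_at: datetime


# Assumed retention periods per artifact kind (illustrative values only).
RETENTION: Dict[str, timedelta] = {
    "temporary_query_result": timedelta(hours=1),
    "cached_aggregate": timedelta(days=1),
    "audit_log": timedelta(days=365),
}


def purge_expired(
    artifacts: List[Artifact],
    now: datetime,
    contract_terminated: bool = False,
) -> List[Artifact]:
    """Return the artifacts that may still be kept at time `now`."""
    if contract_terminated:
        return []  # assumed termination workflow: nothing is retained
    return [a for a in artifacts if now - a.created_at < RETENTION[a.kind]]


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
artifacts = [
    Artifact("temporary_query_result", now - timedelta(hours=2)),
    Artifact("cached_aggregate", now - timedelta(hours=2)),
    Artifact("audit_log", now - timedelta(days=30)),
]

kept = purge_expired(artifacts, now)
print([a.kind for a in kept])  # ['cached_aggregate', 'audit_log']
```

Writing the policy down as data, one retention period per artifact type, is what lets a reviewer verify it instead of taking it on trust.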

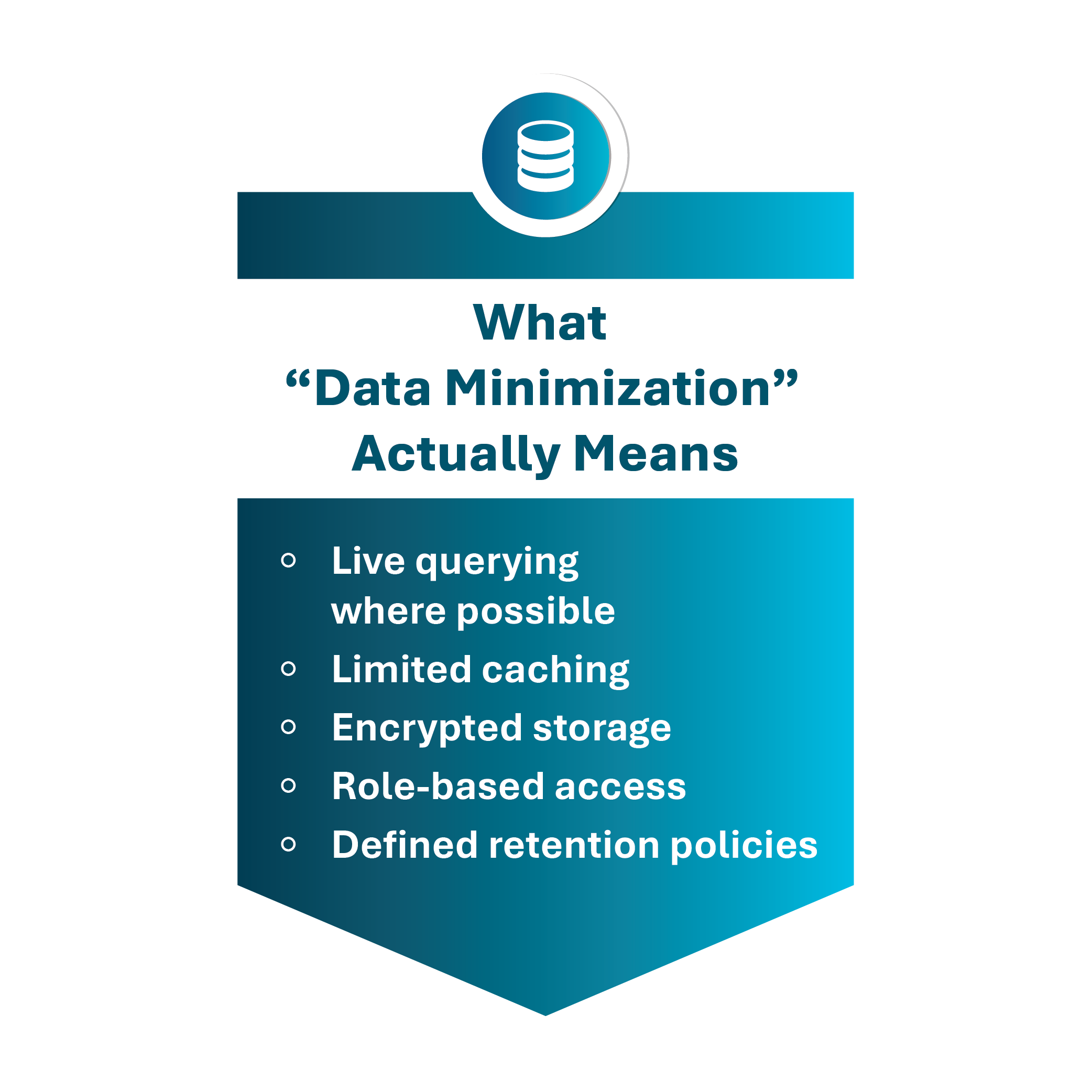

The Real Security Question: Data Minimization

The goal is not to ensure that no data ever touches the system. That standard is unrealistic in modern analytics. The real objective is minimizing unnecessary exposure.

Data minimization starts with least privilege access, where users only see what their role requires. It continues with scoped datasets, so analytics layers do not ingest more HR fields than needed. It includes controlled caching, where temporary results are limited in scope and duration. It requires encryption at rest and in transit, so data is protected wherever it exists. And it depends on access logging, so usage is observable and auditable.
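Two of these controls, least privilege and access logging, can be sketched in a few lines of Python. The role names, field names, and sample record are hypothetical, and the in-memory list stands in for whatever audit store a real platform would use.

```python
from typing import Dict, List, Set

# Assumed mapping of roles to the HR fields each role may see.
ROLE_FIELDS: Dict[str, Set[str]] = {
    "hr_analyst": {"department", "tenure_years"},
    "compensation_partner": {"department", "tenure_years", "salary"},
}

access_log: List[dict] = []  # stand-in for a real audit log store


def scoped_view(record: dict, role: str, user: str) -> dict:
    """Return only the fields the role is allowed to see, and log the access."""
    allowed = ROLE_FIELDS.get(role, set())  # an unknown role sees nothing
    visible = {key: value for key, value in record.items() if key in allowed}
    access_log.append({"user": user, "role": role, "fields": sorted(visible)})
    return visible


# Made-up record for illustration.
record = {"department": "Finance", "tenure_years": 4, "salary": 85000}

analyst_view = scoped_view(record, role="hr_analyst", user="analyst_01")
print(analyst_view)    # {'department': 'Finance', 'tenure_years': 4}
print(access_log[-1])  # records who accessed which fields, not the values
```

Note that the log entry captures field names rather than field values, so the audit trail itself does not become another copy of sensitive data.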

Framed this way, the conversation shifts.

Instead of asking, “Do you store our HR data?” the better question is, “How is data minimized, protected, and lifecycle-managed?”

That question reveals architecture. The first one only reveals fear.

What Security Reviewers Should Ask

Security reviews move faster when the right questions are asked early. Instead of debating generalities, reviewers should focus on architectural specifics.

Use this practical checklist:

- Is data queried live or replicated?

- If replicated, what is the scope and refresh frequency?

- Which fields are cached or temporarily stored?

- How long are temporary query results retained?

- What metadata is stored, and what does it exclude?

- How are cached files encrypted at rest and in transit?

- How is data deletion handled upon contract termination?

- Where are audit and access logs stored?

These questions surface how exposure is managed across the data lifecycle.

Conclusion: Clarity Reduces Fear

HR analytics does not have to mean uncontrolled duplication.

Exposure is determined by architecture, not by the word “cloud.” When data flows are clearly defined, storage is scoped, and lifecycle controls are explicit, analytics and security stop competing with each other.

Transparency reduces friction in IT reviews. Clear diagrams, precise answers, and documented controls move conversations forward faster than generic assurances ever will.

At SplashBI, our architecture is designed with HR sensitivity in mind. Storage is minimal, governed, and purpose-driven. Controls are explicit, not implied.

If you want clarity before commitment, talk to our architecture team for a walkthrough. See exactly how data flows before you sign. The safest analytics environment is the one you fully understand.